MIT Technology Review Insights Report Describes the Cultural Foundations of AI Adoption

Posted 1 month ago

34/2026

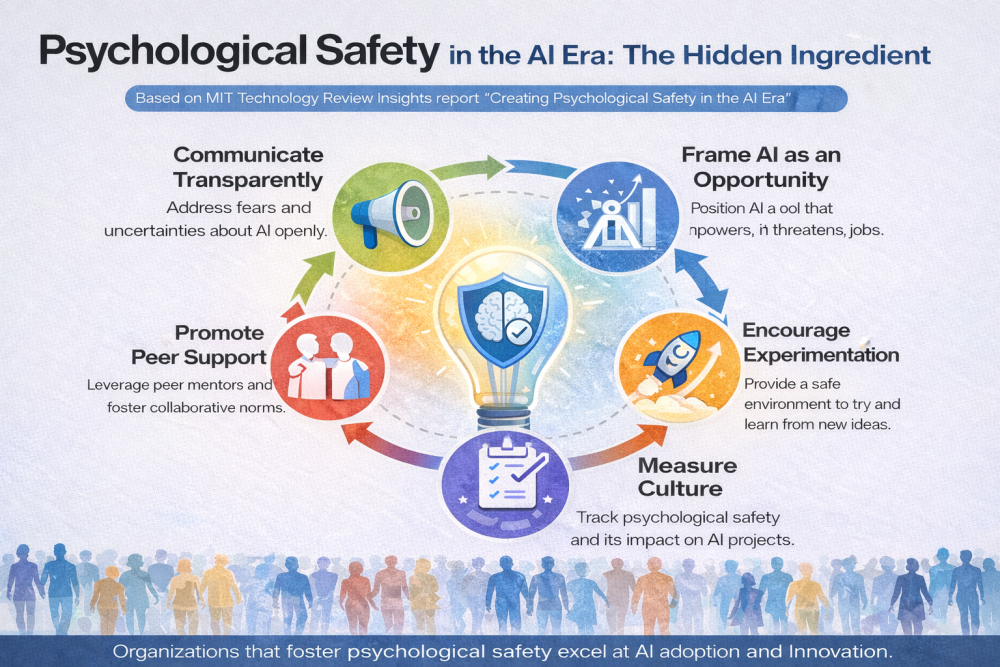

Artificial intelligence is often described as a technological revolution fueled by increasingly advanced algorithms, growing datasets, and immense computational power. However, new evidence indicates that the most important change linked to AI might be cultural rather than technical. A recent MIT Technology Review Insights report, ‘Creating Psychological Safety in the AI Era,’ argues that the success or failure of enterprise AI efforts depends less on the technology's maturity and more on the social environment in which it is used. Practical ways of assessing organizational culture can help leaders identify what needs to change for AI efforts to succeed.

The report argues that AI projects rarely fail due to a lack of processing power or poor code. Instead, they struggle when employees work in environments filled with fear. When employees fear reputational damage, professional consequences, or marginalization, experimentation drops, dissent is silenced, and honest feedback is limited. Such an environment discourages people from questioning assumptions, admitting uncertainty, or acknowledging mistakes during pilot tests, and that reluctance is detrimental to AI development.

Importantly, the report views fear as a structural barrier to innovation. When personnel recognize that failure is an inherent part of trying new technology but feel that failing carries excessive personal risk, they are less likely to engage fully with AI systems. Environments that foster psychological safety, defined as the shared belief that one can speak honestly without fearing negative consequences, support the risk-taking needed for AI systems to develop and be integrated effectively into organizational workflows. This gives leaders a clear reason to prioritize cultural change.

The analysis therefore indicates that the main obstacle to enterprise AI success might not be a lack of technology but a fragile organizational culture. In companies where fear limits participation, even cutting-edge AI systems may not deliver their expected benefits. Conversely, when trust and open questioning are embedded in the culture, the human foundation for AI innovation becomes much stronger.

According to the Editor-in-Chief of the HunarNama, "Exploring effective strategies for cultural change can empower organizations to overcome these barriers and realize AI's full potential."